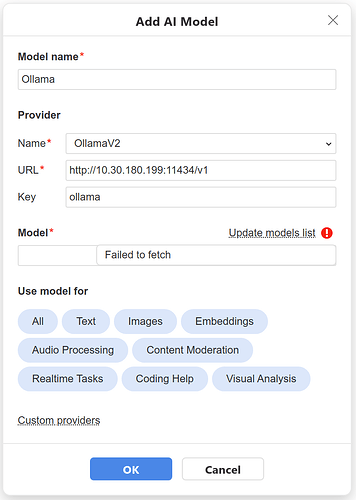

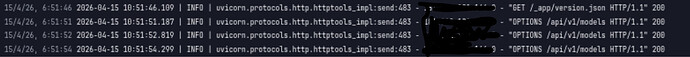

I am running a web UI on top of Ollama in Docker.

Ollama itself is not exposed to the network; the API is provided by the web UI.

"use strict";

(async function(){

class Provider {

/**

* Provider base class.

* @param {string} name Provider name.

* @param {string} url Url to service.

* @param {string} key Key for service. This is an optional field. Some providers may require a key for access.

* @param {string} addon Addon for url. For example: v1 for many providers.

*/

constructor(name, url, key, addon) {

this.name = name || "web-ui";

this.url = url || "ai.host-name-of-local-ai.server";

this.key = key || "secret-key";

this.addon = addon || "";

this.models = [];

this.modelsUI = [];

}

/**

* If you add an implementation here, then no request will be made to the service.

* @returns {Object[] | undefined}

*/

getModels() {

return undefined;

}

/**

* Correct received (*models* endpoint) model object.

*/

correctModelInfo(model) {

if (undefined === model.id && model.name) {

model.id = model.name;

return;

}

model.name = model.id;

}

/**

* Return *true* if you do not want to work with a specific model (model.id).

* The model will not be presented in the combo box with the list of models.

* @returns {boolean}

*/

checkExcludeModel(model) {

return false;

}

/**

* Return an enumeration of the capabilities for this model (model.id). (Some providers do not supply this information.)

* Example: AI.CapabilitiesUI.Chat | AI.CapabilitiesUI.Image;

* @returns {number}

*/

checkModelCapability(model) {

return AI.CapabilitiesUI.All;

}

/**

* Url for a specific endpoint.

* @returns {string}

*/

getEndpointUrl(endpoint, model) {

let Types = AI.Endpoints.Types;

switch (endpoint)

{

case Types.v1.Models:

return "/models";

case Types.v1.Chat_Completions:

return "/chat/completions";

case Types.v1.Completions:

return "/completions";

case Types.v1.Images_Generations:

return "/images/generations";

case Types.v1.Images_Edits:

return "/images/edits";

case Types.v1.Images_Variarions:

return "/images/variations";

case Types.v1.Embeddings:

return "/embeddings";

case Types.v1.Audio_Transcriptions:

return "/audio/transcriptions";

case Types.v1.Audio_Translations:

return "/audio/translations";

case Types.v1.Audio_Speech:

return "/audio/speech";

case Types.v1.Moderations:

return "/moderations";

case Types.v1.Language:

return "/completions";

case Types.v1.Code:

return "/completions";

case Types.v1.Realtime:

return "/realtime";

default:

break;

}

return "";

}

/**

* An object-addition to the model. It is used, among other things, to configure the model parameters.

* Don't override this method unless you know what you're doing.

* @returns {Object}

*/

getRequestBodyOptions() {

return {};

}

/**

* The returned object is an enumeration of all the headers for the requests.

* @returns {Object}

*/

getRequestHeaderOptions() {

let headers = {

"Content-Type" : "application/json"

};

if (this.key)

headers["Authorization"] = "Bearer " + this.key;

return headers;

}

/**

* This method returns whether a proxy server needs to be used to work with this provider.

* Don't override this method unless you know what you're doing.

* @returns {boolean}

*/

isUseProxy() {

return false;

}

/**

* This method returns whether this provider is only supported in the desktop application.

* Don't override this method unless you know what you're doing.

* @returns {boolean}

*/

isOnlyDesktop() {

return false;

}

/**

* Get request body object by message.

* @param {Object} message

* *message* is in the following format:

* {

* messages: [

* { role: "developer", content: "You are a helpful assistant." },

* { role: "system", content: "You are a helpful assistant." },

* { role: "user", content: "Hello" },

* { role: "assistant", content: "Hey!" },

* { role: "user", content: "Hello" },

* { role: "assistant", content: "Hey again!" }

* ]

* }

*/

getChatCompletions(message, model) {

return {

model : model.id,

messages : message.messages

}

}

/**

* Get request body object by message.

* @param {Object} message

* *message* is in the following format:

* {

* text: "Please, calculate 2+2."

* }

*/

getCompletions(message, model) {

return {

model : model.id,

prompt : message.text

}

}

/**

* Convert *getChatCompletions* and *getCompletions* answer to result simple message.

* @returns {Object} result

* *result* is in the following format:

* {

* content: ["Hello", "Hi"]

* }

*/

getChatCompletionsResult(message, model) {

let result = {

content : []

};

let arrResult = message.data.choices || message.data.content || message.data.candidates;

if (!arrResult)

return result;

let choice = arrResult[0];

if (!choice)

return result;

if (choice.message && choice.message.content)

result.content.push(choice.message.content);

if (choice.text)

result.content.push(choice.text);

if (choice.content) {

if (typeof(choice.content) === "string")

result.content.push(choice.content);

else if (Array.isArray(choice.content.parts)) {

for (let i = 0, len = choice.content.parts.length; i < len; i++) {

result.content.push(choice.content.parts[i].text);

}

}

}

let trimArray = ["\n".charCodeAt(0)];

for (let i = 0, len = result.content.length; i < len; i++) {

let iEnd = result.content[i].length - 1;

let iStart = 0;

while (iStart < iEnd && trimArray.includes(result.content[i].charCodeAt(iStart)))

iStart++;

while (iEnd > iStart && trimArray.includes(result.content[i].charCodeAt(iEnd)))

iEnd--;

if (iEnd > iStart && ((0 !== iStart) || ((result.content[i].length - 1) !== iEnd)))

result.content[i] = result.content[i].substring(iStart, iEnd + 1);

}

return result;

}

/**

* ========================================================================================

* The following are methods for internal work. There is no need to overload these methods.

* ========================================================================================

*/

createInstance(name, url, key, addon) {

//let inst = Object.create(Object.getPrototypeOf(this));

let inst = new this.constructor();

inst.name = name;

inst.url = url;

inst.key = key;

inst.addon = addon || "";

return inst;

}

checkModelsUI() {

for (let i = 0, len = this.models.length; i < len; i++) {

let model = this.models[i];

let modelUI = new window.AI.UI.Model(model.name, model.id, model.provider);

modelUI.capabilities = this.checkModelCapability(model);

this.modelsUI.push(modelUI);

}

}

}

window.AI.Provider = Provider;

await AI.loadInternalProviders();

})();
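
For readers adapting this class, here is a standalone sketch that mirrors the branch logic of `getChatCompletionsResult` above so it can be run outside the plugin. The function name `extractContent` and the sample payloads are illustrative assumptions, not part of the plugin, and the newline trimming is simplified to a regular expression.

```javascript
"use strict";

// Hypothetical standalone helper (not part of the plugin): mirrors the
// branches of getChatCompletionsResult for common response shapes.
function extractContent(data) {
  const content = [];
  const arr = data.choices || data.content || data.candidates;
  if (!Array.isArray(arr) || !arr[0]) return content;

  const choice = arr[0];
  // Chat-completions style: choices[0].message.content
  if (choice.message && choice.message.content) content.push(choice.message.content);
  // Plain completions style: choices[0].text
  if (choice.text) content.push(choice.text);
  if (typeof choice.content === "string") {
    content.push(choice.content);
  } else if (choice.content && Array.isArray(choice.content.parts)) {
    // Gemini style: candidates[0].content.parts[].text
    for (const part of choice.content.parts) {
      if (typeof part.text === "string") content.push(part.text);
    }
  }
  // Simplification: strip leading and trailing newlines with a regex.
  return content.map((s) => s.replace(/^\n+|\n+$/g, ""));
}

// Illustrative payloads (assumed shapes, not captured from a real server).
const openAiStyle = { choices: [{ message: { role: "assistant", content: "\nHello\n" } }] };
const geminiStyle = { candidates: [{ content: { parts: [{ text: "Hi" }, { text: "there" }] } }] };

console.log(extractContent(openAiStyle));
console.log(extractContent(geminiStyle));
```

If the web UI returns a shape that none of these branches match, the result stays empty, which may be worth checking in the browser's network tab.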

Thank you @schizza for the provided data!

We are checking the situation, I will contact you shortly.

Hello @schizza

Could you please try using this sample as guidance?

"use strict";

class Provider extends AI.Provider {

constructor() {

super("Google-Gemini", "https://generativelanguage.googleapis.com", "", "v1beta");

}

correctModelInfo(model) {

model.id = model.name;

let index = model.name.indexOf("models/");

if (index === 0)

model.name = model.name.substring(7);

}

checkExcludeModel(model) {

if (model.id === "models/chat-bison-001" ||

model.id === "models/text-bison-001")

return true;

if (-1 !== model.id.indexOf("gemini-1.0"))

return true;

return false;

}

checkModelCapability(model) {

if (model.inputTokenLimit)

model.options.max_input_tokens = model.inputTokenLimit;

if (Array.isArray(model.supportedGenerationMethods) &&

model.supportedGenerationMethods.includes("generateContent"))

{

model.endpoints.push(AI.Endpoints.Types.v1.Chat_Completions);

let caps = AI.CapabilitiesUI.Chat;

if (-1 !== model.id.indexOf("vision"))

caps |= AI.CapabilitiesUI.Vision;

return AI.CapabilitiesUI.Chat | AI.CapabilitiesUI.Vision;

}

if (Array.isArray(model.supportedGenerationMethods) &&

model.supportedGenerationMethods.includes("embedContent"))

{

model.endpoints.push(AI.Endpoints.Types.v1.Embeddings);

return AI.CapabilitiesUI.Embeddings;

}

return AI.CapabilitiesUI.All;

}

getEndpointUrl(endpoint, model) {

let Types = AI.Endpoints.Types;

let url = "";

switch (endpoint)

{

case Types.v1.Models:

url = "/models";

break;

default:

url = "/" + model.id + ":generateContent";

break;

}

if (this.key)

url += "?key=" + this.key;

return url;

}

getRequestHeaderOptions() {

let headers = {

"Content-Type" : "application/json"

};

return headers;

}

getChatCompletions(message, model) {

let body = { contents : [] };

for (let i = 0, len = message.messages.length; i < len; i++) {

let rec = {

role : message.messages[i].role,

parts : [ { text : message.messages[i].content } ]

};

if (rec.role === "assistant")

rec.role = "model";

body.contents.push(rec);

}

return body;

}

}

This sample doesn’t include an API key, but the provider still connects.

OK, with this sample I am able to add a custom provider and reach my API endpoint, but the request always fails with 403, even if I provide a valid key in the settings dialog.

When I curl my API endpoint with the provided API key, I successfully get the list of models.

The API key was changed after posting to prevent unwanted access.
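
One difference that may be worth checking, given that curl succeeds with the same key: the base class shown earlier sends the key as an `Authorization: Bearer` header, while the Gemini sample overrides `getRequestHeaderOptions` to drop that header and appends `?key=` to the URL instead. Below is a minimal sketch of the two request shapes. It assumes the plugin joins `url`, `addon`, and the endpoint path with slashes (the thread does not show that code); `joinUrl`, `bearerRequest`, and `queryKeyRequest` are hypothetical names, and the host is a placeholder.

```javascript
"use strict";

// Hypothetical helper: join base URL, optional addon (e.g. "v1") and path.
function joinUrl(base, addon, path) {
  const parts = [base.replace(/\/+$/, "")];
  if (addon) parts.push(addon.replace(/^\/+|\/+$/g, ""));
  return parts.join("/") + path;
}

// Base-class style: the key travels in the Authorization header.
function bearerRequest(base, addon, key) {
  const headers = { "Content-Type": "application/json" };
  if (key) headers["Authorization"] = "Bearer " + key;
  return { url: joinUrl(base, addon, "/models"), headers };
}

// Gemini-sample style: the key travels in the query string,
// and no Authorization header is sent.
function queryKeyRequest(base, addon, key) {
  let url = joinUrl(base, addon, "/models");
  if (key) url += "?key=" + key;
  return { url, headers: { "Content-Type": "application/json" } };
}

// Placeholder host and key, for illustration only.
console.log(bearerRequest("https://ai.example.lan/api", "", "secret-key"));
console.log(queryKeyRequest("https://ai.example.lan/api", "", "secret-key"));
```

If the working curl command passes the key as a bearer token, the first shape is the one the provider needs to reproduce, for example by leaving `getRequestHeaderOptions` and `getEndpointUrl` at their base-class defaults.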

Thank you for your update, we are checking the situation.

Dear @schizza

Could you please provide us with your .js file for internal tests? You can contact me via PM.

Hello there,

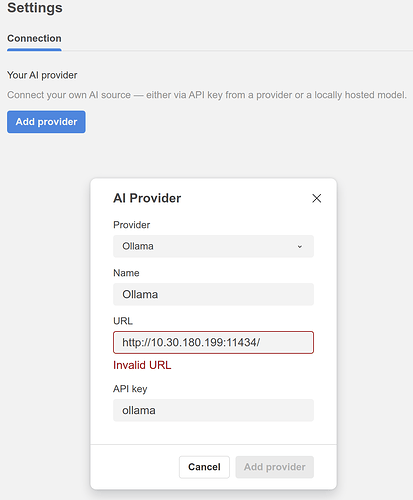

As I am experimenting with OnlyOffice 9 and the AI plugin, I wanted to connect to my LAN-accessible Local-AI server following the official documentation (activated CORSALLOWEDORIGINS=“*”, etc.). Note that the Local-AI software is not installed on the same machine as OnlyOffice.

I was unable to get it working: OnlyOffice reports a “Failed to fetch” error, whereas the Local-AI web UI loads normally in my browser.
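
In Chromium-based environments, “Failed to fetch” is typically the message of the `TypeError` that `fetch` rejects with when no readable HTTP response arrives at all, for example because the host is unreachable or the response is blocked by CORS. An HTTP error such as 403, by contrast, resolves normally with `response.ok === false`. The sketch below separates the two cases. `probeModels` is a hypothetical diagnostic helper, not part of the plugin, and the address in the usage comment is a placeholder.

```javascript
"use strict";

// Hypothetical diagnostic helper, not part of the plugin.
// fetchImpl is injectable so the function can be exercised without a server.
async function probeModels(baseUrl, key, fetchImpl = fetch) {
  const headers = { "Content-Type": "application/json" };
  if (key) headers["Authorization"] = "Bearer " + key;
  try {
    const response = await fetchImpl(baseUrl + "/models", { headers });
    // The server answered; a 401/403 here points at the key, not at CORS.
    return response.ok
      ? { kind: "ok", status: response.status }
      : { kind: "http-error", status: response.status };
  } catch (err) {
    // No readable response: network failure, blocked request, or CORS rejection.
    return { kind: "no-response", message: String(err && err.message) };
  }
}

// Example with a placeholder address, to be run from the browser console
// of the page that hosts the editor:
// probeModels("http://192.168.1.50:8080/v1", "").then(console.log);
```

A `no-response` result from the editor page combined with a working web UI in a separate browser tab would be consistent with a CORS or mixed-content block rather than a wrong address.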

I’ve found this topic here by searching, so just wanted to say I’m experiencing the same problem.

Thanks a lot

Hello @gNovap

Please specify which local AI provider is being used exactly.

Hello @Yassine

Thank you for the ideas. I’d like to point out that this topic is mostly related to local AI model support, whereas your suggestions seem to be global. I kindly ask you to start a new topic with these suggestions so that we can process them accordingly. That way we will avoid a heavy mix of discussions in a single topic.

Thank you for understanding.

The custom provider doesn’t work for me:

"use strict";

class Provider extends AI.Provider {

constructor() {

super("Ollama IP", "http://10.30.180.199:11434", "ollama", "v1");

}

correctModelInfo(model) {

model.id = model.name;

let index = model.name.indexOf("models/");

if (index === 0)

model.name = model.name.substring(7);

}

checkExcludeModel(model) {

if (model.id === "models/chat-bison-001" ||

model.id === "models/text-bison-001")

return true;

if (-1 !== model.id.indexOf("gpt-oss-120b:F16"))

return true;

return false;

}

checkModelCapability(model) {

if (model.inputTokenLimit)

model.options.max_input_tokens = model.inputTokenLimit;

if (Array.isArray(model.supportedGenerationMethods) &&

model.supportedGenerationMethods.includes("generateContent"))

{

model.endpoints.push(AI.Endpoints.Types.v1.Chat_Completions);

let caps = AI.CapabilitiesUI.Chat;

if (-1 !== model.id.indexOf("vision"))

caps |= AI.CapabilitiesUI.Vision;

return AI.CapabilitiesUI.Chat | AI.CapabilitiesUI.Vision;

}

if (Array.isArray(model.supportedGenerationMethods) &&

model.supportedGenerationMethods.includes("embedContent"))

{

model.endpoints.push(AI.Endpoints.Types.v1.Embeddings);

return AI.CapabilitiesUI.Embeddings;

}

return AI.CapabilitiesUI.All;

}

getEndpointUrl(endpoint, model) {

let Types = AI.Endpoints.Types;

let url = "";

switch (endpoint)

{

case Types.v1.Models:

url = "/models";

break;

default:

url = "/" + model.id + ":generateContent";

break;

}

if (this.key)

url += "?key=" + this.key;

return url;

}

getRequestHeaderOptions() {

let headers = {

"Content-Type" : "application/json"

};

return headers;

}

getChatCompletions(message, model) {

let body = { contents : [] };

for (let i = 0, len = message.messages.length; i < len; i++) {

let rec = {

role : message.messages[i].role,

parts : [ { text : message.messages[i].content } ]

};

if (rec.role === "assistant")

rec.role = "model";

body.contents.push(rec);

}

return body;

}

}

The local hostname or IP address doesn’t work.

By the way, can the existing “Ollama” provider accept a manually entered model name instead of checking/connecting to the local Ollama? It worked with the older version of the plugin, but after I updated to the latest version it doesn’t work anymore.

The only way to make it work is to use the existing Ollama provider with “localhost” and then set up SSH tunneling, as suggested by @svennd.

Hello @fenris

It seems you used the OpenAI config sample for your provider. Please try using the sample for Ollama: onlyoffice.github.io/sdkjs-plugins/content/ai/scripts/engine/providers/internal/ollama.js in the ONLYOFFICE-PLUGINS/onlyoffice.github.io repository on GitHub (master branch).

Please let us know the result.
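
For orientation, here is a minimal sketch of what an OpenAI-compatible custom provider can look like when it relies on the base-class defaults posted at the top of this topic instead of the Gemini-specific overrides. This is not the content of the linked `ollama.js`. `BaseProvider` is a reduced stand-in for `AI.Provider` so that the snippet runs on its own, the class name `OllamaLanProvider` and the model id are hypothetical, and the address is the one from the post above.

```javascript
"use strict";

// Reduced stand-in for AI.Provider (assumption: only the defaults used here).
class BaseProvider {
  constructor(name, url, key, addon) {
    this.name = name;
    this.url = url;
    this.key = key;
    this.addon = addon || "";
  }

  // Same header logic as the base class posted earlier in the topic.
  getRequestHeaderOptions() {
    const headers = { "Content-Type": "application/json" };
    if (this.key) headers["Authorization"] = "Bearer " + this.key;
    return headers;
  }

  // OpenAI-style request body, as in the base class.
  getChatCompletions(message, model) {
    return { model: model.id, messages: message.messages };
  }
}

// Inside the plugin this would read `class Provider extends AI.Provider`.
class OllamaLanProvider extends BaseProvider {
  constructor() {
    super("Ollama IP", "http://10.30.180.199:11434", "", "v1");
  }
  // No Gemini-specific overrides: no ":generateContent" URLs
  // and no "contents" request body.
}

const provider = new OllamaLanProvider();
const body = provider.getChatCompletions(
  { messages: [{ role: "user", content: "Hello" }] },
  { id: "llama3" } // hypothetical model id
);
console.log(JSON.stringify(body));
console.log(provider.getRequestHeaderOptions());
```

The point of the sketch is the request shape: Ollama documents an OpenAI-compatible API under `/v1`, which expects a `model` plus `messages` body rather than the `contents` array that the Gemini sample builds.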

How exactly did you install it? The current version is v.9.0.4.

Please follow the official installation guide and install the current official version: Installation - ONLYOFFICE

We need to troubleshoot the issue on the current version, not on a test build.

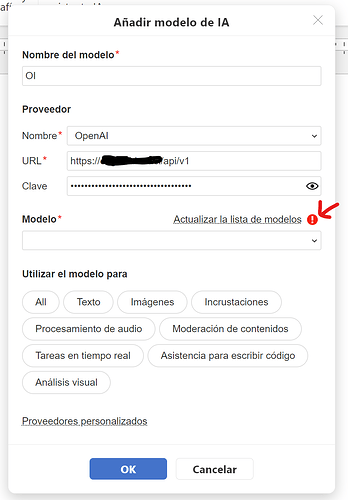

Hello, the connection between OnlyOffice and Open WebUI was working well for me using the OpenAI template, but a few days ago it stopped working, possibly because of the update. Now it doesn’t show the list of models and reports “Failed to fetch”.

Hello @gerald

Can you also specify the version of the AI plugin that is being used? You can find this information in Plugin Manager > AI plugin card.